Being visible on Google is essential in a market like Dubai, where consumers are continuously looking for goods and services online. It is a major source of leads, inquiries, and revenue. However, many companies overlook one point: content and keywords are not the only factors that affect a company's Google ranking. Ranking begins with whether search engines can properly access and understand your website.

This is where Googlebot comes in. It is the crawler responsible for finding and examining your pages before they can ever appear in search results. However good your offerings are, your chances of ranking decline if Googlebot struggles to navigate your website or understand your content.

Knowing how Googlebot operates gives you a practical advantage. In a competitive digital market like Dubai, understanding Googlebot helps you find hidden technical flaws, make your website easier for search engines to access, and ultimately grow organic traffic. This guide explains how Googlebot works and what you can do to help it access and understand your website.

What Is Googlebot?

Put simply, Googlebot is the web crawler Google uses to find and scan webpages on the internet.

Think of it as a digital inspector. It visits your website, scans your content, and reports back to Google on what your pages contain. Google uses this information to decide whether and where your pages should appear in search results.

Your chances of ranking are greatly reduced if Googlebot is unable to access or comprehend your website.

How Googlebot Works: A Simple Breakdown

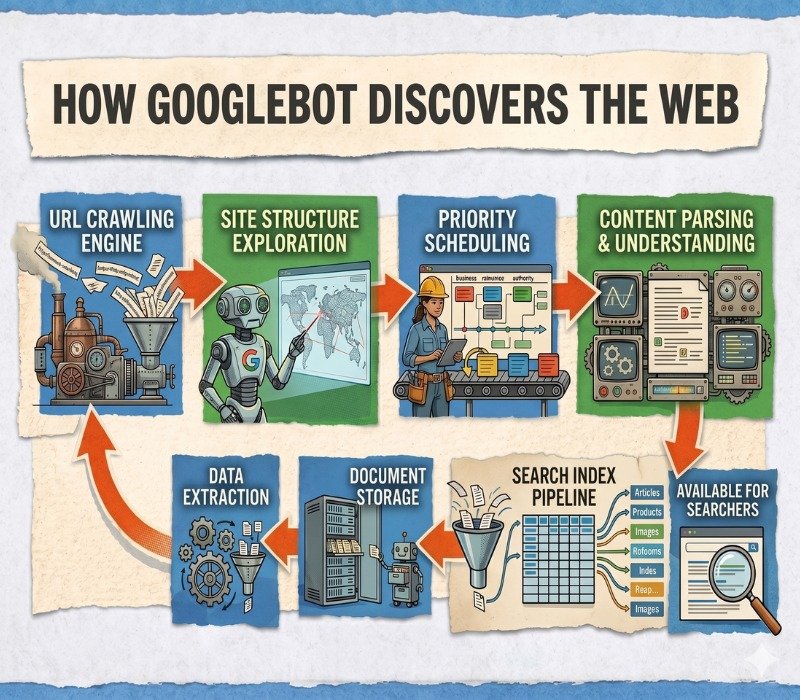

Googlebot follows a structured process before your content appears in search results. To see how this works, consider an everyday example.

Imagine you own a retail store in Dubai Mall. Before customers walk in, someone needs to:

• Discover your store exists

• Enter the store without obstacles

• Understand what you are selling

• Decide if it is worth recommending to others

Googlebot follows a similar process, moving through four stages before your content can appear in search results.

1. Crawling: Discovering Your Pages

Googlebot starts by finding URLs. It does this through:

• Links from other websites

• Internal links within your site

• Sitemaps you provide

If your pages are not linked properly, Googlebot may never find them.
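To make the crawling step concrete, here is a toy model in Python. It treats a website as a small map of which page links to which, and discovers pages by following links from a starting set, as the bullets above describe. The page paths are hypothetical, and this is an illustration of the idea, not how Googlebot is actually built.

```python
from collections import deque

# Hypothetical site: each page maps to the pages it links to.
LINKS = {
    "/": ["/services/", "/about/"],
    "/services/": ["/services/villa-cleaning/", "/contact/"],
    "/about/": ["/contact/"],
    "/services/villa-cleaning/": [],
    "/contact/": [],
    "/offers/ramadan/": ["/contact/"],  # nothing links here: an orphan page
}


def discover(seeds, links):
    """Breadth-first discovery: return every page reachable from the seeds."""
    found = set(seeds)
    queue = deque(seeds)
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in found:
                found.add(target)
                queue.append(target)
    return found


# Starting only from the homepage, the orphan page is never discovered.
print("/offers/ramadan/" in discover(["/"], LINKS))

# A sitemap that lists the page gives the crawler a second entry point.
print("/offers/ramadan/" in discover(["/", "/offers/ramadan/"], LINKS))
```

The page `/offers/ramadan/` links out, but no other page links to it, so following links from the homepage never reaches it. Listing it as an extra starting point, which is what a sitemap does, makes it discoverable.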

2. Fetching: Accessing Content

Once it finds a page, it tries to load it. This includes:

• Reading text

• Loading images

• Processing code

If your website is slow, blocked or poorly structured, Googlebot may struggle to access everything.

3. Rendering: Understanding the Page

Googlebot processes your page like a browser. It interprets:

• Layout

• Content

• Structure

Modern websites often rely on JavaScript. If that JavaScript is not optimized, it can prevent Googlebot from fully understanding your content.
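Googlebot can run JavaScript, but content that only appears after scripts run depends on that extra rendering step. One rough self-check is whether your key text is already present in the HTML your server sends. The sketch below uses Python's built-in `html.parser` to extract text while skipping `<script>` and `<style>` contents. The markup and phrase are hypothetical, and this is not a simulation of Google's renderer.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text from raw HTML, skipping <script> and <style> contents."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip = 0  # how deep we are inside <script>/<style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.parts.append(data)


def in_initial_html(html, phrase):
    """True if the phrase appears as text before any JavaScript runs."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.parts).split())  # normalise whitespace
    return phrase.lower() in text.lower()


# Hypothetical pages: one sends the heading in its HTML, one injects it with JavaScript.
server_rendered = "<html><body><h1>Villa Cleaning in Dubai</h1></body></html>"
js_only = (
    '<html><body><div id="app"></div>'
    '<script>document.getElementById("app").textContent = "Villa Cleaning in Dubai";</script>'
    "</body></html>"
)
print(in_initial_html(server_rendered, "Villa Cleaning in Dubai"))
print(in_initial_html(js_only, "Villa Cleaning in Dubai"))
```

If important text only shows up in the second form, Google has to render the page before it can see that text.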

4. Indexing: Storing Information

After processing, Google decides whether to store your page in its index.

• If your page is indexed, it becomes eligible to appear in search results

• If not, it is essentially invisible

Why Googlebot Matters for Dubai Businesses

Dubai is a highly competitive digital market. Whether you run a real estate firm, eCommerce store, restaurant, or service-based business, your audience is searching online first.

Here is the key point:

• If Googlebot cannot efficiently crawl and index your website, your SEO efforts will not translate into visibility.

Practical Tips for Googlebot SEO Optimization

Here are simple actions you can take to improve how Googlebot sees your site.

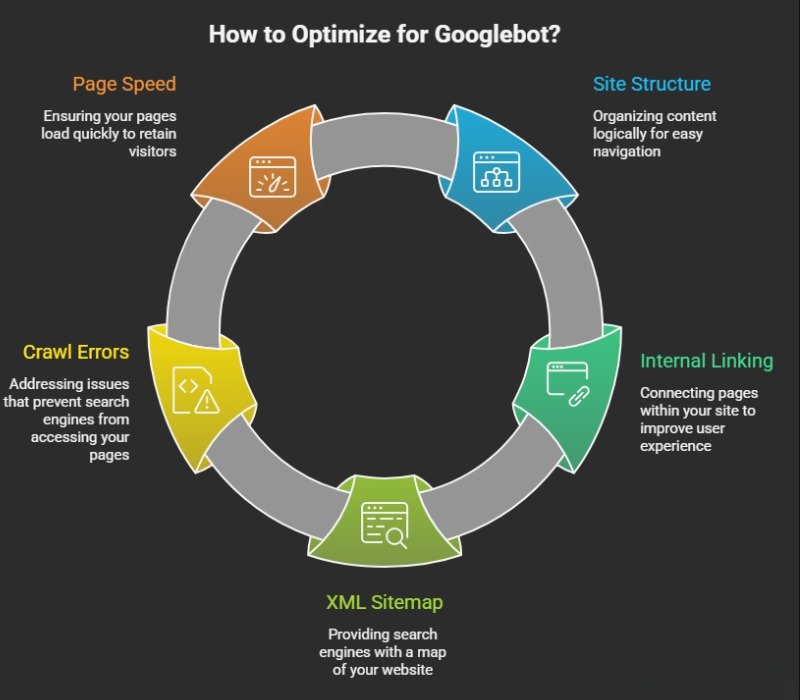

Improve Your Site Structure

Keep your site clean and easy to navigate.

• Use clear menus

• Maintain logical page hierarchies

• Ensure every important page is easy to reach

This helps Googlebot move through your site without confusion.

Optimize Your Internal Linking

Link your pages to each other in a natural way.

• Do not leave important pages isolated

• Use clear, descriptive words in your links

This helps Googlebot understand how your pages connect to each other.
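As a quick illustration of using clear words in your links, this sketch lists the links on a page whose visible text is vague. The set of generic phrases is an assumption for illustration, not a Google rule, and the sample HTML is hypothetical.

```python
from html.parser import HTMLParser

# Assumed list of vague anchor phrases; adjust it to suit your site.
GENERIC_ANCHORS = {"click here", "read more", "here", "learn more", "more"}


class LinkCollector(HTMLParser):
    """Collects (href, anchor text) pairs for every <a href="..."> on a page."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            text = " ".join("".join(self._text).split())
            self.links.append((self._href, text))
            self._href = None


def vague_links(html):
    """Return links whose anchor text is one of the generic phrases."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    return [(href, text) for href, text in collector.links
            if text.lower() in GENERIC_ANCHORS]


page = ('<p>See our <a href="/services/villa-cleaning/">villa cleaning services in Dubai</a>'
        ' or <a href="/offers/">click here</a>.</p>')
print(vague_links(page))
```

Here only the second link is flagged; its text says nothing about the page it points to.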

Submit an XML Sitemap

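A sitemap helps Google discover the pages on your site. It shows:

• What pages exist

• Which ones you consider most important

This is especially helpful for new websites, which have few links from other sites, and for large websites with many pages.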
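Sitemaps follow the sitemaps.org XML format: a `urlset` element containing one `url` entry per page, each with a `loc` and, optionally, a `lastmod` date. Most website platforms and SEO plugins generate this file for you; the sketch below, using Python's standard `xml.etree.ElementTree`, only shows what the file contains. The URLs and dates are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

# Hypothetical pages: (full URL, last modified date in YYYY-MM-DD form).
PAGES = [
    ("https://www.example.com/", "2024-05-01"),
    ("https://www.example.com/services/", "2024-04-20"),
]


def build_sitemap(pages):
    """Return sitemap XML (UTF-8 bytes) for a list of (url, lastmod) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc  # ElementTree escapes "&" and similar
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


if __name__ == "__main__":
    print(build_sitemap(PAGES).decode("utf-8"))
```

The output would typically be saved as `sitemap.xml` at the root of the site and then submitted in Google Search Console.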

Improve Page Speed and Mobile Experience

A slow site can limit how much of it Googlebot is able to crawl.

• Make your pages load fast

• Ensure your site works smoothly on mobile

Many users in Dubai browse on their phones, and Google primarily crawls websites with its smartphone crawler.

How to Control How Googlebot Accesses Your Website

Not every page on your website needs to appear on Google. Sometimes, you may want to hide certain pages like admin areas, duplicate pages, or test content.

You can guide Googlebot using a couple of simple methods:

Robots.txt (Basic Control File)

This is a small file on your website that tells Googlebot which pages it should or should not visit.

Think of it like a “do not enter” sign for certain parts of your website.

For example, you can block:

• Private pages

• Thank-you pages

• Duplicate sections

You don’t need to be technical. Most developers or SEO tools can set this up for you.
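For readers who want to see the rules in action, here is a hypothetical robots.txt that blocks an admin area and a thank-you page, checked with Python's built-in `urllib.robotparser`. This parser follows the basic robots.txt rules and handles simple `Disallow` prefixes like these, but it does not implement every Google extension (such as `*` wildcards inside paths), so test more complex files with Google's own tools.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for www.example.com.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /thank-you/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Ask whether a crawler identifying as Googlebot may fetch each path.
for path in ["/services/", "/admin/login", "/thank-you/"]:
    url = "https://www.example.com" + path
    print(path, parser.can_fetch("Googlebot", url))
```

`Disallow: /admin/` matches by prefix, so it blocks `/admin/login` and every other URL under `/admin/`, while `/services/` stays open.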

Meta Robots Tags (Page-Level Control)

If you want more control, you can give instructions directly on a specific page.

This tells Googlebot things like:

• “Show this page on Google”

• “Don’t show this page on Google”

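This is useful when you want to control individual pages instead of the entire website. The robots.txt file controls whether Googlebot can access a page, while meta robots tags control what happens after Googlebot accesses it. Because Googlebot can only read a meta robots tag on a page it is allowed to crawl, do not also block that page in robots.txt if you want it kept out of search results.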
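A meta robots tag sits in the page's `<head>`, for example `<meta name="robots" content="noindex">` to keep a page out of Google's results. The sketch below reads those directives with Python's `html.parser` and reports whether a page allows indexing. It only looks at `noindex` and `none` (which Google treats as `noindex, nofollow`), and the sample pages are hypothetical.

```python
from html.parser import HTMLParser


class RobotsMetaReader(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() in ("robots", "googlebot"):
            for directive in attributes.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def allows_indexing(html):
    """False if the page asks search engines not to index it."""
    reader = RobotsMetaReader()
    reader.feed(html)
    reader.close()
    return not ({"noindex", "none"} & reader.directives)


hidden = '<html><head><meta name="robots" content="noindex, follow"></head><body>Thank you!</body></html>'
visible = "<html><head><title>Villa cleaning</title></head><body>Services</body></html>"
print(allows_indexing(hidden), allows_indexing(visible))
```

A page with no robots meta tag at all is treated as indexable, which is why the second example passes.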

How to Check if Googlebot Is Visiting Your Website

A common question business owners have is:

“Is Google even seeing my website?”

The answer lies in how Google crawls websites and how often your pages are being accessed. The good news is, you can check this easily.

1. Crawl Stats Report

Inside Google Search Console, you can see how often Googlebot visits your website.

This report shows:

• How many pages are being crawled

• When Google last visited your site

If your numbers are very low, it may mean your site needs better structure or visibility.

2. URL Inspection Tool

This is one of the easiest tools to use.

You simply enter your page URL, and Google tells you:

• Whether the page is indexed

• Whether Google can access it

• Whether there are any issues

It is like asking Google directly, “Can you see this page?”

Where Organisations Commonly Make Mistakes

Many companies in Dubai spend a lot of money on branding, paid advertising, and website design yet neglect the technical aspects of SEO. The result is a website that impresses visitors but that search engines still struggle to fully access and understand.

A frequent error is unintentionally blocking crucial pages through robots.txt or incorrect settings, which can stop Googlebot from viewing important parts of your website. Another is relying too heavily on visually intricate, JavaScript-heavy designs that search engines find difficult to understand.

Websites are also frequently launched and then left untouched for months. Outdated content and broken links can signal that the website is not being regularly maintained.

As a result, the website struggles to gain visibility in search engines because it is not aligned with how Googlebot operates, no matter how good your offerings are.

How SAAR Dubai Fits Into the Picture

Fixing these issues can be difficult without experience. This is where SAAR Dubai can help. We review your website from Googlebot's point of view, find any crawl issues, and fix them so your site performs better in Google Search.

Final Takeaway

Googlebot is the starting point of your visibility on Google. If your site is easy to crawl and understand, your chances of ranking improve. Focus on the basics:

• Keep your site structured

• Link your pages well

• Make your site fast and mobile-friendly

• Fix errors when they appear

In a market like Dubai, small improvements can create a big advantage. Businesses that get this right show up more often and turn that visibility into real growth.

Also Read: What technical SEO factors are most important for traffic growth?

FAQ

1. What is Googlebot and how does it work?

Googlebot is Google's main web crawler, one of several crawlers Google uses to discover and fetch content from the web. It works in four steps: it discovers pages, loads them, understands their content, and then Google decides whether they should be indexed and appear in search results.

2. What are the different types of Googlebot?

There are two main types of Googlebot: Googlebot Smartphone and Googlebot Desktop. Google mainly crawls with the smartphone version, so your site must work well on mobile.

3. How can I optimize my website for Googlebot to improve rankings?

You can help Googlebot and improve your organic rankings by improving your site structure, submitting an XML sitemap, improving page speed and user experience, and fixing server errors. These steps help Googlebot crawl and understand your site better.

4. How long does it take for a website to appear on Google search results?

There is no definite timeline. It can take anywhere from a few days to a few weeks. Good structure, proper linking, and submitting your site to Google can help speed this up.

5. Why is my website not showing on Google search results?

Common reasons include: your website is not indexed yet, pages are blocked, technical errors exist, Google considers your content weak or duplicated, or the site is new or slow and needs more time to be indexed. Fixing these issues usually improves visibility.